Orchestrate

.procfwk

A cross-tenant, metadata-driven processing framework for Azure Data Factory and Azure Synapse Analytics, achieved by coupling orchestration pipelines with a SQL database and a set of Azure Functions.


Azure Data Factory


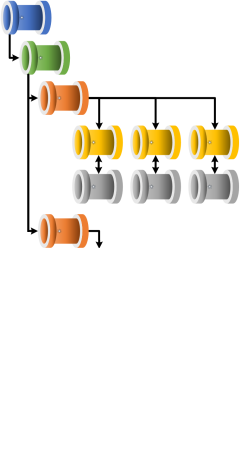

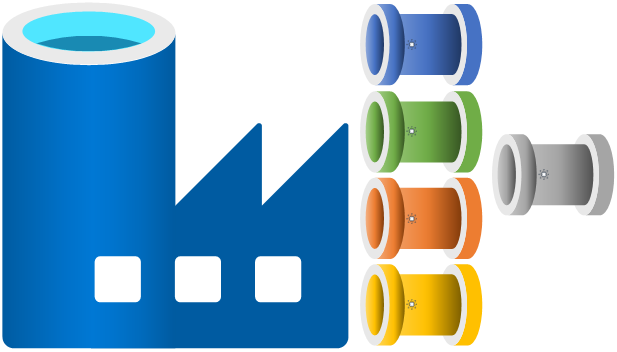

Azure Data Factory is one of the primary resources within the processing framework solution. It is used both to execute the orchestration pipelines (Grandparent, Parent, Child, Infant, Utilities) and to deliver the worker pipelines created as modules outside of the processing solution.
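As a rough illustration of what triggering a worker pipeline involves, the Python sketch below builds a request for the Data Factory REST API `createRun` operation (api-version 2018-06-01). It is not the framework's own implementation; the subscription, resource group, factory and pipeline names are hypothetical placeholders, and acquiring the bearer token is assumed to happen elsewhere.

```python
import json
import urllib.request
from urllib.parse import quote

# Data Factory REST API version for the pipeline createRun operation.
API_VERSION = "2018-06-01"


def create_run_url(subscription_id: str, resource_group: str,
                   factory_name: str, pipeline_name: str) -> str:
    """Build the Azure Resource Manager endpoint that starts a pipeline run."""
    # Percent-encode each path segment so names containing spaces stay valid.
    sub, rg, factory, pipeline = (
        quote(part, safe="")
        for part in (subscription_id, resource_group, factory_name, pipeline_name)
    )
    return (
        "https://management.azure.com"
        f"/subscriptions/{sub}"
        f"/resourceGroups/{rg}"
        "/providers/Microsoft.DataFactory"
        f"/factories/{factory}"
        f"/pipelines/{pipeline}"
        f"/createRun?api-version={API_VERSION}"
    )


def build_create_run_request(url: str, token: str,
                             parameters: dict) -> urllib.request.Request:
    """Prepare (but do not send) a POST carrying the pipeline parameters as JSON."""
    body = json.dumps(parameters).encode("utf-8")
    return urllib.request.Request(
        url,
        data=body,
        headers={
            "Authorization": f"Bearer {token}",  # token acquisition is assumed
            "Content-Type": "application/json",
        },
        method="POST",
    )
```

Sending the request (for example with `urllib.request.urlopen`) returns a JSON body whose `runId` can then be used to poll the status of the worker pipeline run.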


In the context of the processing framework, Data Factory operates at the control flow level as one of the orchestrators available for a given solution. Data flow level transformation operations are not handled within the framework; dataset level tasks are expected to be delivered by the worker pipelines that the framework triggers.
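To make the control flow boundary concrete, here is a minimal Python sketch of an orchestrator loop that only decides which worker pipelines to start and records their run IDs, without touching any data itself. The `WorkerPipeline` metadata shape, its `enabled` flag and the `trigger` callback are hypothetical stand-ins for the framework's SQL metadata and its pipeline execution call.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List


@dataclass(frozen=True)
class WorkerPipeline:
    # Hypothetical metadata row: which worker to run and with what parameters.
    name: str
    parameters: Dict[str, object]
    enabled: bool = True


def orchestrate(workers: List[WorkerPipeline],
                trigger: Callable[[str, Dict[str, object]], str]) -> Dict[str, str]:
    """Trigger each enabled worker pipeline and return its run ID by name.

    The orchestrator only decides what runs; all dataset-level work
    happens inside the worker pipelines that `trigger` starts.
    """
    run_ids: Dict[str, str] = {}
    for worker in workers:
        if not worker.enabled:
            continue  # disabled in metadata, so skip without triggering
        run_ids[worker.name] = trigger(worker.name, worker.parameters)
    return run_ids
```

In a real deployment `trigger` would wrap a call such as the Data Factory `createRun` operation; keeping it as a parameter makes the control flow testable with a stub.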

Data Factory uses the following common components to deliver the framework execution runs.